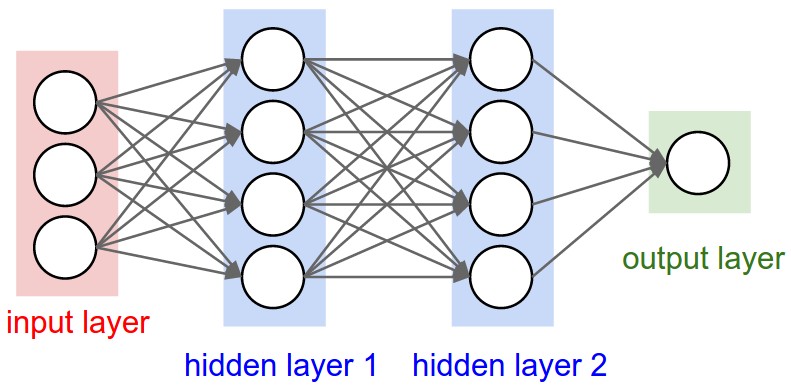

Deep learning is the engine behind current applications of artificial intelligence, most notably image and speech recognition, and is really neural networks on steroids. Goodfellow et al., in their book Deep Learning, say that artificial neural networks (ANNs) are “one of the names that deep learning has gone by.” A simple neural network in supervised learning takes input data, “adjusts” it through a sequence of linear operations whose weights start out random, converts it to a predicted output, compares that prediction with the known output, and then repeats the loop over and over until it “learns” a set of adjustments that do a good job of mapping the input to the known output.
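To make that loop concrete, here is a minimal sketch in plain Python. It is not a full neural network: it assumes the simplest possible case, a single weight and bias fitted by gradient descent on squared error. The function name `train_linear_model`, the learning rate, and the toy data (outputs generated from y = 2x + 1) are all illustrative choices, not anything taken from Goodfellow et al.

```python
import random


def train_linear_model(inputs, targets, learning_rate=0.05, epochs=2000, seed=0):
    """Fit prediction = weight * x + bias by repeating predict/compare/adjust."""
    rng = random.Random(seed)
    # The "adjustments" start out random.
    weight = rng.uniform(-1.0, 1.0)
    bias = rng.uniform(-1.0, 1.0)
    n = len(inputs)

    for _ in range(epochs):
        grad_weight = 0.0
        grad_bias = 0.0
        for x, y in zip(inputs, targets):
            # Predict, then compare with the known output.
            error = (weight * x + bias) - y
            # Accumulate the gradient of the mean squared error.
            grad_weight += 2.0 * error * x / n
            grad_bias += 2.0 * error / n
        # Nudge the adjustments in the direction that reduces the error.
        weight -= learning_rate * grad_weight
        bias -= learning_rate * grad_bias

    return weight, bias


# Toy data (assumed for illustration): known outputs follow y = 2x + 1.
inputs = [0.0, 1.0, 2.0, 3.0, 4.0]
targets = [2.0 * x + 1.0 for x in inputs]

weight, bias = train_linear_model(inputs, targets)
print(f"learned weight={weight:.3f}, bias={bias:.3f}")
```

After enough repetitions the learned weight and bias should settle close to 2 and 1, the values used to generate the toy data. A real network stacks many such adjustable operations, but the predict, compare, adjust cycle is the same.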

Deep learning adds complexity and scale to the process. A convolution is an operation on two functions (an input and a weighting function), and it takes the place of the single linear multiplication used in each layer of a simple network. Goodfellow et al. use location measurements as an example: instead of relying on a single latitude/longitude measurement, you would use a weighted average of measurements over time, with more recent measurements given higher weights. A tensor is the multidimensional extension of a matrix (i.e. scalar > vector > matrix > tensor). Tensor operations and convolutions add complexity, and hence power, to neural networks, allowing them to extract features without supervision and to learn to identify categories.
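The weighted-average idea can be sketched in a few lines of plain Python. This assumes a one-dimensional position reading and a short, hand-picked list of weights; the name `smooth_position` and the sample numbers are hypothetical, chosen only to illustrate a discrete convolution of the form s(t) = Σ w(a) · x(t − a).

```python
def smooth_position(measurements, weights):
    """Weighted average over time; weights[0] applies to the most recent reading."""
    window = len(weights)
    total_weight = sum(weights)
    smoothed = []
    # Start once a full window of past measurements is available.
    for t in range(window - 1, len(measurements)):
        # Discrete convolution: sum over a of weights[a] * measurements[t - a].
        weighted_sum = sum(weights[a] * measurements[t - a] for a in range(window))
        smoothed.append(weighted_sum / total_weight)
    return smoothed


# Illustrative noisy position readings taken at successive time steps.
measurements = [10.0, 10.4, 9.8, 10.1, 10.3]
# More recent readings get higher weights.
weights = [3.0, 2.0, 1.0]

smoothed = smooth_position(measurements, weights)
print(smoothed)
```

In a convolutional network the weights are not hand-picked as they are here; they are among the adjustments the network learns during training.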

Alan Blair, our deep learning expert, can tell you much more in his May 11 Deep Learning course.