When searching for the “founding fathers” of data science long before the advent of Deep Learning, you might at first glance rule out anyone who long predates the computer and data revolutions of the last quarter century.

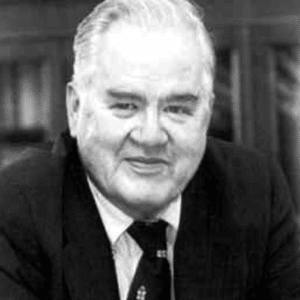

But some consider John Tukey (below), the Princeton statistician who named and developed the field of “exploratory data analysis,” a pioneer data scientist. During World War II, Tukey worked at the Fire Control Research Office in Princeton, NJ.

The office was concerned not with putting out fires, but with conducting mathematical and statistical research on targeting, aiming and ranging algorithms for artillery, rockets, aircraft weaponry, and the like. After the war, Tukey ended up teaching at Princeton.

His contributions to statistics were both methodological (e.g., the tests that bear his name) and philosophical. Tukey approached statistics as the “science of learning from data.” This may seem unremarkable to us now, but at the time statistics had become a tightly constrained discipline. It dealt with the narrowly focused goal of drawing inferences from data collected in experiments, using sometimes arcane and complex logical and mathematical procedures. Tukey popularized data analysis as part of a broad scientific mission of advancing knowledge, and he forged links to the engineering and computer science communities (he also coined the terms “bit” and “software”).

Tukey’s seminal paper “The Future of Data Analysis” dates from 1962, when, of course, neither big data nor the personal computer revolution was at hand. The era of Deep Learning was still in the distant future (though our course starts Friday!). Nonetheless, Tukey’s orientation toward “learning from data” presaged the data-centric approach that underpins today’s data science.